Abstract

Background

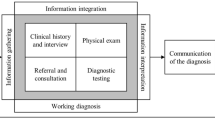

Differential diagnosis (DDX) generators are computer programs that generate a DDX based on various clinical data.

Objective

We identified evaluation criteria through consensus, applied these criteria to describe the features of DDX generators, and tested performance using cases from the New England Journal of Medicine (NEJM©) and the Medical Knowledge Self Assessment Program (MKSAP©).

Methods

We first identified evaluation criteria by consensus. Then we performed Google® and PubMed searches to identify DDX generators. To be included, DDX generators had to do the following: generate a list of potential diagnoses rather than text or article references; rank or indicate critical diagnoses that need to be considered or eliminated; accept at least two signs, symptoms or disease characteristics; provide the ability to compare the clinical presentations of diagnoses; and provide diagnoses in general medicine. The evaluation criteria were then applied to the included DDX generators. Lastly, the performance of the DDX generators was tested with findings from 20 test cases. Performance on each case was scored from one to five, with a score of five indicating presence of the exact diagnosis. Mean scores and confidence intervals were calculated.
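The scoring summary described above can be sketched as follows. This is a minimal illustration, not the authors' analysis code: the abstract does not state which interval method was used, so a t-based interval is assumed here, and the function name `mean_with_ci` is hypothetical.

```python
import math
import statistics

# Two-sided 95% critical value of Student's t with 19 degrees of freedom,
# which matches n = 20 test cases. Assumption: the abstract does not say
# whether a t-based or normal-based interval was used.
T_CRIT_DF19 = 2.093


def mean_with_ci(scores, t_crit=T_CRIT_DF19):
    """Return (mean, lower, upper) for per-case scores on the 1-5 scale.

    The default t_crit is only appropriate for 20 scores; pass a different
    critical value for other sample sizes.
    """
    if len(scores) < 2:
        raise ValueError("need at least two scores")
    if any(s < 1 or s > 5 for s in scores):
        raise ValueError("scores must lie on the 1-5 scale")
    mean = statistics.mean(scores)
    # Standard error of the mean from the sample standard deviation.
    sem = statistics.stdev(scores) / math.sqrt(len(scores))
    half_width = t_crit * sem
    return mean, mean - half_width, mean + half_width
```

Calling `mean_with_ci` with the 20 per-case scores for one program returns that program's mean and interval bounds. The per-case scores are not given in the abstract, so any input used to try the function would be invented data.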

Key Results

Twenty-three programs were initially identified and four met the inclusion criteria. These four programs were evaluated using the consensus criteria, which included the following: input method; mobile access; filtering and refinement; lab values, medications, and geography as diagnostic factors; evidence-based medicine (EBM) content; references; and drug information content source. The mean scores (95% confidence interval) from performance testing on a five-point scale were Isabel© 3.45 (2.53–4.37), DxPlain® 3.45 (2.63–4.27), Diagnosis Pro® 2.65 (1.75–3.55) and PEPID™ 1.70 (0.71–2.69). The number of exact matches paralleled the mean score finding.
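As a quick consistency check on the reported figures, the sketch below recomputes each interval's midpoint and half-width from the bounds given above. The helper name `summarize` is hypothetical; the numbers are taken directly from the abstract.

```python
# Mean and 95% confidence interval bounds as reported in the abstract.
reported = {
    "Isabel": (3.45, 2.53, 4.37),
    "DxPlain": (3.45, 2.63, 4.27),
    "Diagnosis Pro": (2.65, 1.75, 3.55),
    "PEPID": (1.70, 0.71, 2.69),
}


def summarize(lower, upper):
    """Return (midpoint, half_width) of an interval, rounded to 2 decimals."""
    midpoint = round((lower + upper) / 2, 2)
    half_width = round((upper - lower) / 2, 2)
    return midpoint, half_width


for name, (mean, lower, upper) in reported.items():
    midpoint, half_width = summarize(lower, upper)
    print(f"{name}: mean={mean:.2f} midpoint={midpoint:.2f} "
          f"half-width={half_width:.2f}")
```

Each midpoint coincides with the reported mean, so the intervals are symmetric. The half-widths (roughly 0.8 to 1.0 points) are large relative to the gaps between adjacent programs, and the PEPID™ lower bound of 0.71 falls below the scale minimum of one, which a symmetric interval around a low mean can produce.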

Conclusions

Consensus criteria for DDX generator evaluation were developed. Application of these criteria as well as performance testing supports the use of DxPlain® and Isabel© over the other currently available DDX generators.

Contributors

The authors would like to thank medical students Genine Siciliano, Agnes Nambiro, Grace Garey, and Mary Lou Glazer for entering the findings into the DDX generators for testing.

Sponsors: This study was not funded by an external sponsor.

Presentations: This information was presented in poster format at the Diagnostic Error in Medicine Conference 2010, Toronto, Canada.

Conflict of Interest

None disclosed.

Electronic supplementary material

Below is the link to the electronic supplementary material.

Appendix 1

Search Criteria for finding DDX Programs. (DOCX 10 kb)

Appendix 2

Cases Used for Testing the DDX Generator. (DOCX 13 kb)

Appendix 3

Excluded Programs. (DOCX 12 kb)

About this article

Cite this article

Bond, W.F., Schwartz, L.M., Weaver, K.R. et al. Differential Diagnosis Generators: an Evaluation of Currently Available Computer Programs. J GEN INTERN MED 27, 213–219 (2012). https://doi.org/10.1007/s11606-011-1804-8

DOI: https://doi.org/10.1007/s11606-011-1804-8